Cybersecurity Risks in Multi-Agent AI Systems: Defending Against Deepfake Attacks

Explore emerging cybersecurity threats in multi-agent AI and proven strategies to secure autonomous systems, essential for software engineers building AI infrastructure.

Cybersecurity Risks in Multi-Agent AI Systems: Defending Against Deepfake Attacks

Multi-agent AI systems are shaking up artificial intelligence and software engineering. But cybersecurity risks, especially deepfake attacks, threaten to derail them. One breach could cost enterprises big time. Deepfakes are everywhere now, and their rise means more headaches for anyone building these systems.

Software engineers and cybersecurity folks, this guide breaks down multi-agent AI vulnerabilities. You'll see how deepfake attacks work and pick up practical ways to defend your setups. Let's build AI that's tough against tricks like these.

What Are Multi-Agent AI Systems, Anyway?

Picture a team of smart AI agents working together. Each one tackles a specific job, like crunching data or making decisions. They chat through set protocols to hit shared goals. In machine learning and software engineering, this teamwork scales up operations and speeds things along. Agents split tasks on the fly, handling huge datasets way quicker than a lone model could.

Think about self-driving car fleets coordinating traffic or dodging obstacles. Or trading bots juggling strategies across markets in real time. These setups push automation in the tech industry. But that connectivity? It means trouble in one spot spreads fast. Software engineers need to bake security in from day one. These systems thrive in changing environments, but only if you guard against threats.

The Biggest Cybersecurity Risks in Multi-Agent AI Setups

Attackers love the weak spots in multi-agent environments. They hit at scale. Inter-agent chats can get intercepted or tweaked, messing up coordination. Data poisoning sneaks bad inputs into shared machine learning models, slowly poisoning the whole system. Impersonation lets bad guys take over and send fake orders. And as you add more agents, the attack surface explodes.

Large language models power many agents, and they bring extra headaches. Prompt injections trick them easily. Backdoors in retrieval systems open doors wide. Trust between agents? That's a huge risk, one bad apple spoils the bunch. Security leaders worry a lot about this. Without fixes, everyday ops become open invitations for breaches.

How Deepfake Attacks Pull One Over on Multi-Agent AI

Deepfakes whip up fake audio, video, or images that fool AI's senses. In a multi-agent world, crooks mimic trusted voices or faces to hijack calls. Imagine a fake boss voice telling agents to do something dumb.

One fake chat can spark chaos across the network. Deepfake tools have gotten scarily good, cheap, and common. Cybercriminals use them for scams and worse. Picture a deepfake video faking sensor feeds in autonomous fleets, leading to pileups. In finance, it could trigger bad trades. We've seen AI-fueled hacks steal massive data troves from governments. Machine learning checks often miss the mark without solid liveness tests or deep biometrics. That's how deepfakes turn teamwork into a weakness.

Smart Ways to Fight Back Against Deepfakes in AI

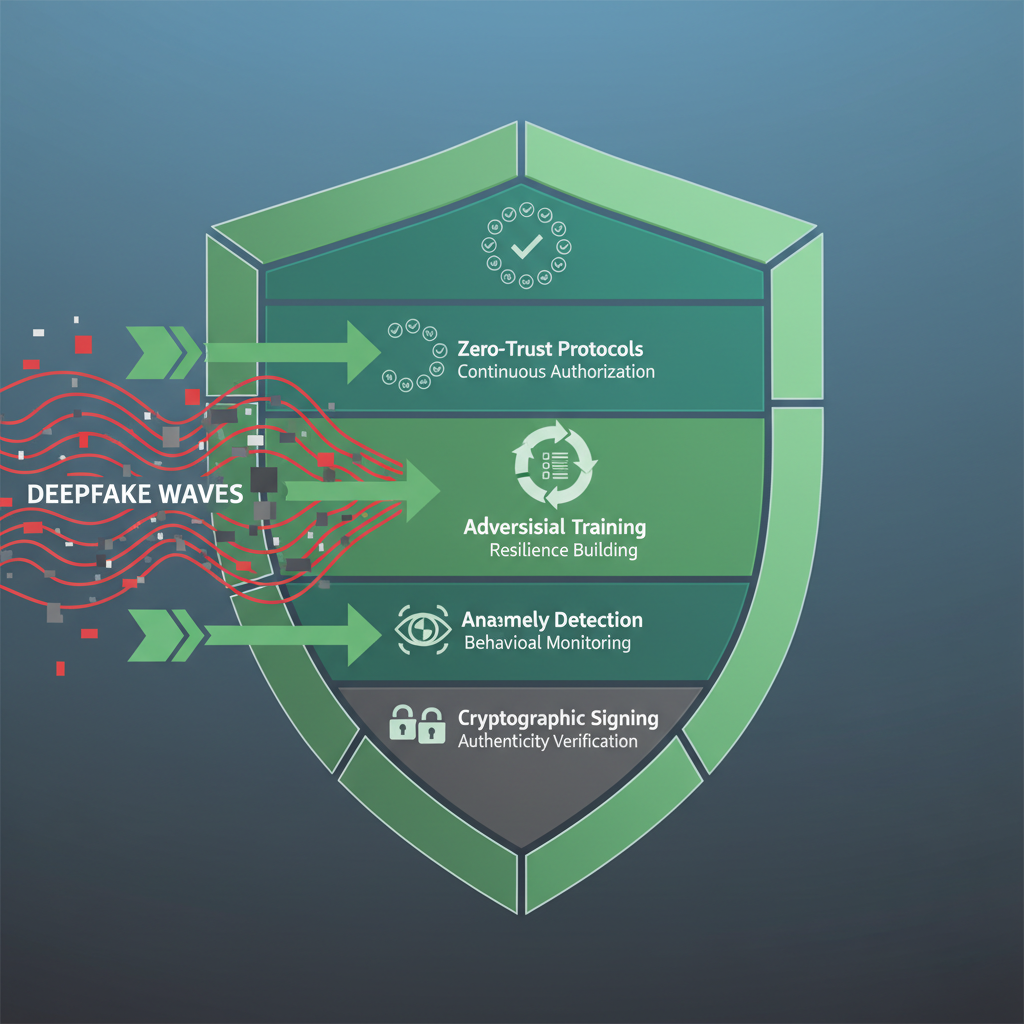

Layer up your defenses to rebuild trust. Start with multi-factor auth for agents: crypto signatures on messages plus behavior checks. Every chat gets verified, no faking it.

Roll out real-time anomaly hunters. Machine learning watchdogs spot weird voice glitches or video glitches quick. Train your models against deepfakes from the start, throw variants at them to toughen up. And lock down comms with zero-trust: verify everything, trust nothing.

In code, grab PyTorch for those adversarial drills in training loops. Add end-to-end encryption everywhere. It works, boosting detection rates. Ditch quick fixes for upfront strength. Your multi-agent systems will handle deception like pros.

Everyday Habits to Lock Down Multi-Agent AI

Make security a habit in software engineering. Run vulnerability scans and pen tests often, fake deepfake hits every few months. Tools like Burp Suite dig into protocols.

Box agents in containers and microservices. Docker and Kubernetes keep breaches contained with strict network rules. Hook up SIEM for constant log watching, hunt threats on spikes in odd commands.

Train your team with hands-on deepfake demos. Talk real risks in the wild. Weave security into CI/CD: check models for injection flaws pre-launch.

This covers all bases against trust issues and more. Turn risks into routine wins.

What's Coming in AI Cybersecurity Threats?

Deepfakes go multimodal next, mixing voice, video, text to overwhelm defenses. Quantum threats loom, so grab quantum-safe encryption for machine learning pipes early.

Industry groups push shared standards for agent auth. Design AI ethically, verify from the ground up. Watch for AI-scaled attacks, auto-deepfakes, malware that rewrites agents. State actors already run deepfake scams.

Jump into consortia for security benchmarks. Leaders see the dangers. Test pilots in air-gapped setups. Balance bold innovation with smart caution.

Grab these ideas, software engineers. Fortify your multi-agent AI against deepfakes and whatever's next. Turn cybersecurity headaches into edges in artificial intelligence. What will you build first?

Related Articles

PAN-OS GlobalProtect CVE-2026-0257 Active Exploitation Steps for DevOps Teams

Edge Computing Strategies to Strengthen Cybersecurity in Web Development Apps

Hybrid Cloud Deployment Models for Banks Handling Regulated Data