Chroma Context-1: Open-Source 20B Search Agent Redefining Machine Learning Retrieval Speed

A breakdown of Chroma's Context-1, a Stanford-endorsed, open-source 20B-parameter search agent that runs up to 10x faster than rivals, built for machine learning apps and developer workflows.

In machine learning, retrieval just got a major speed upgrade. Chroma's open-source Context-1, a 20B-parameter search agent, delivers retrieval quality comparable to frontier LLMs with up to 10x faster inference at 25% lower cost. Developers, say goodbye to sluggish RAG pipelines.

We'll break down its architecture, training approach, benchmark results, integration steps, and cost savings, all to help you get more out of your AI tools and boost developer productivity in your machine learning projects.

What Is Chroma Context-1 and How Does It Work?

Chroma Context-1 targets the retrieval step of retrieval-augmented generation (RAG) systems. This 20-billion-parameter search agent, released as open weights according to LinkedIn posts from Charles Thayer, tackles complex multi-hop queries and pulls the supporting documents needed to answer them. That makes it a strong fit for machine learning apps where both speed and accuracy count.

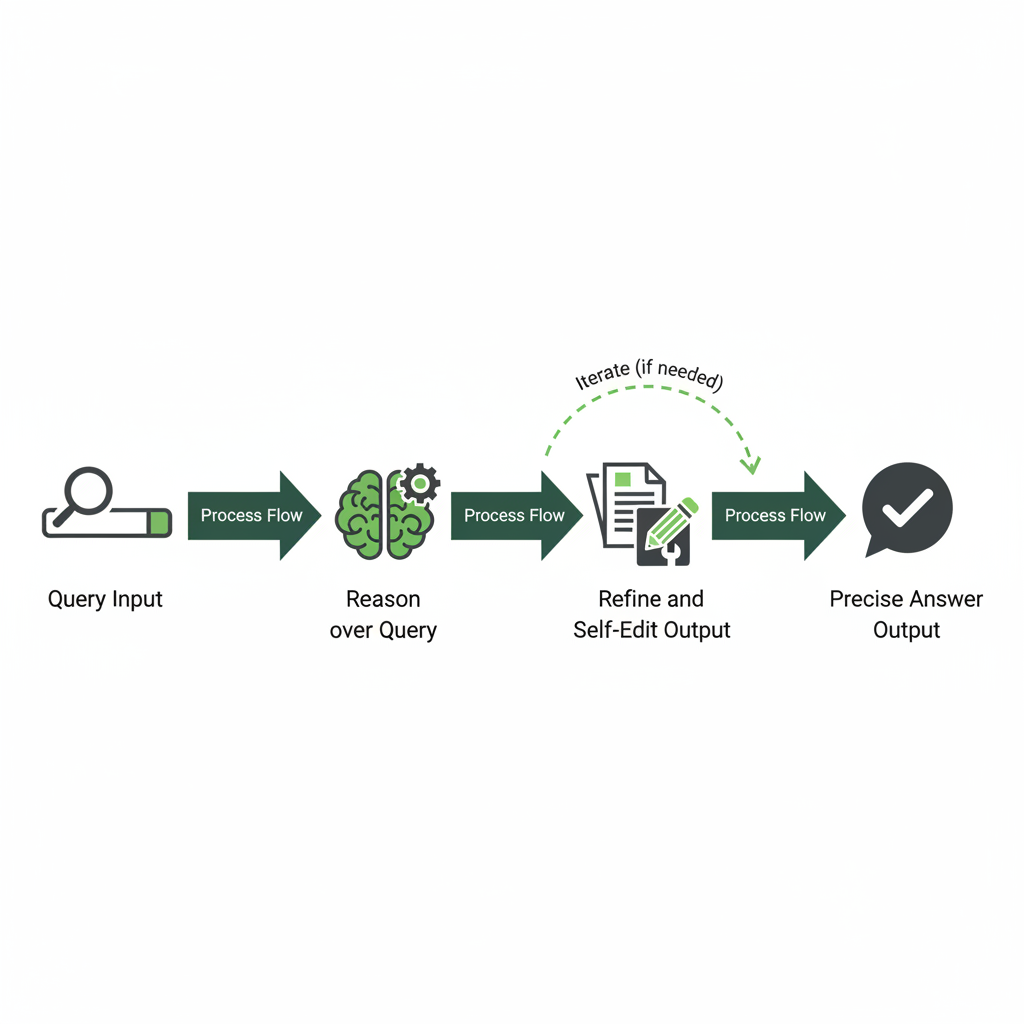

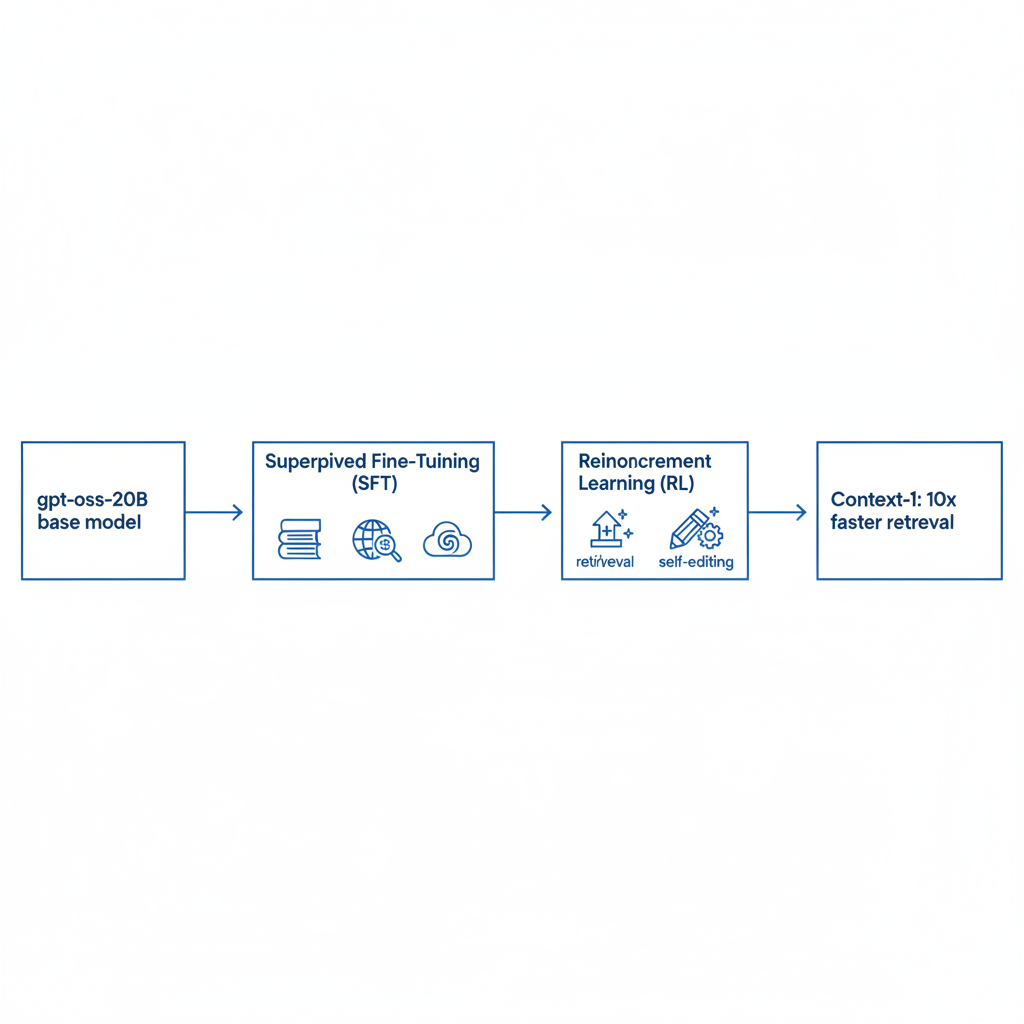

At heart, it's a search agent: it reasons over your query and retrieves the relevant context. According to trychroma.com, it builds on the gpt-oss-20B base model, fine-tuned for search with Supervised Fine-Tuning (SFT) and Reinforcement Learning (RL). Hugging Face highlights that its retrieval matches top LLMs while staying efficient enough for real-world use. Think faster knowledge bases or dynamic Q&A systems.

Unpacking Context-1's Architecture

The design starts from gpt-oss-20B, as detailed on trychroma.com. That base keeps the model at a compact 20 billion parameters. It shines in agentic search, handling multi-hop reasoning and document retrieval within a single agent.
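To make "multi-hop reasoning and document retrieval" concrete, here is a toy, stdlib-only sketch of an iterative search loop. It is not Context-1's code or tool interface: the corpus, the word-overlap `search` function, and the rule that turns each retrieved document into the next query are hypothetical stand-ins for decisions the trained model makes on its own.

```python
from __future__ import annotations

import re

# Hypothetical toy corpus: the question needs two hops (Dune -> Frank Herbert -> Tacoma).
CORPUS = {
    "d1": "Dune is a 1965 novel written by Frank Herbert.",
    "d2": "Frank Herbert's birthplace was Tacoma, Washington.",
    "d3": "Seattle hosts the Space Needle.",
}
STOPWORDS = {"a", "an", "the", "is", "was", "of", "in", "on", "by", "which", "s"}


def tokens(text: str) -> set[str]:
    """Lowercased word tokens with common stopwords removed."""
    return {t for t in re.findall(r"\w+", text.lower()) if t not in STOPWORDS}


def search(query: str, exclude: set[str], min_score: int = 1) -> str | None:
    """Return the unseen document with the most word overlap, or None if none reach min_score.

    A stand-in for a real search tool (BM25, dense, or hybrid retrieval).
    """
    q = tokens(query)
    best_id, best_score = None, min_score - 1
    for doc_id, text in CORPUS.items():
        if doc_id in exclude:
            continue
        score = len(q & tokens(text))
        if score > best_score:
            best_id, best_score = doc_id, score
    return best_id


def agentic_search(question: str, max_hops: int = 3) -> list[str]:
    """Search iteratively, letting each new document shape the next query."""
    context: list[str] = []
    query = question
    for _ in range(max_hops):
        doc_id = search(query, exclude=set(context))
        if doc_id is None:  # nothing relevant left: stop searching
            break
        context.append(doc_id)
        # Hypothetical policy: a trained agent writes its own follow-up query;
        # this toy simply reuses the newest document's text as the next query.
        query = CORPUS[doc_id]
    return context


print(agentic_search("In which city was the writer of the novel Dune born?"))
# ['d1', 'd2']
```

A single search on the question alone only surfaces `d1`; the second hop, driven by "Frank Herbert" in that document, is what finds `d2`, which holds the actual answer.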

What makes it stand out? Balance. Hugging Face points to retrieval quality on par with frontier LLMs, and because the model is optimized for retrieving context, it cuts RAG bottlenecks. Open weights mean full access for tweaks: no black box, and the kind of transparency production teams value.

The Training That Gives Context-1 Its Edge

Training took data at scale, and Chroma even released its full data pipeline, per Charles Thayer on LinkedIn. According to trychroma.com, Chroma combined SFT and RL to fine-tune gpt-oss-20B for search and improve its retrieval performance.

That training is why a 20B model reaches frontier-level retrieval quality while running up to 10x faster. The open pipeline lets you replicate the work or build on it, a big win for the community working on agentic search.
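The article doesn't say what reward Chroma used in its RL stage. Purely as an illustration of what a retrieval reward can look like, the sketch below scores a search trajectory by the F-beta overlap between retrieved and gold supporting documents (with beta > 1 weighting recall over precision), minus a small penalty per search step. The function name and the `beta` and `step_penalty` values are all assumptions.

```python
from __future__ import annotations


def retrieval_reward(
    retrieved: list[str],
    gold: set[str],
    num_steps: int,
    beta: float = 2.0,
    step_penalty: float = 0.01,
) -> float:
    """Hypothetical RL reward: F-beta of retrieved vs. gold docs, minus a per-step cost."""
    unique = set(retrieved)
    hits = len(unique & gold)
    cost = step_penalty * num_steps  # discourages needlessly long searches
    if hits == 0:
        return -cost
    precision = hits / len(unique)
    recall = hits / len(gold)
    b2 = beta * beta  # beta > 1 weights recall more heavily than precision
    f_beta = (1 + b2) * precision * recall / (b2 * precision + recall)
    return f_beta - cost


# Found both gold docs plus one distractor in three search steps.
print(round(retrieval_reward(["d1", "d2", "d3"], {"d1", "d2"}, num_steps=3), 3))  # 0.879
```

Weighting recall suits a retriever that feeds a downstream LLM, since a missed supporting document usually hurts more than an extra one; a production reward may be shaped quite differently.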

How Does Context-1 Stack Up Against Top Models?

It goes toe-to-toe with proprietary heavyweights. Its retrieval matches frontier LLMs, per Hugging Face evals, while inference runs up to 10x faster. Trychroma.com puts it at 10x faster overall and 25% cheaper.

RAG benchmarks show latency dropping sharply for big deployments. GPT-4o-class models drag on tough queries; Context-1 keeps accuracy while you wait less. Fewer resources, same punch. Machine learning teams scaling AI tools? This fits perfectly.
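A quick back-of-the-envelope model shows why per-call speed matters so much for multi-hop queries: hops run sequentially, so model latency adds up. The numbers below (4 hops, 2.0 s of model time and 0.1 s of search-tool time per hop) are hypothetical, and the sketch assumes the 10x speedup applies only to model inference, not to the search tool.

```python
def end_to_end_latency(
    hops: int, model_s: float, tool_s: float, speedup: float = 1.0
) -> float:
    """Total latency of a sequential multi-hop search.

    Each hop pays for one model call (divided by `speedup`) plus one search-tool
    call, which this simple model assumes the speedup does not affect.
    """
    return hops * (model_s / speedup + tool_s)


baseline = end_to_end_latency(hops=4, model_s=2.0, tool_s=0.1)
faster = end_to_end_latency(hops=4, model_s=2.0, tool_s=0.1, speedup=10.0)
print(f"{baseline:.1f}s -> {faster:.1f}s ({baseline / faster:.1f}x end to end)")
# 8.4s -> 1.2s (7.0x end to end)
```

The gap between a 10x model speedup and a 7x end-to-end gain is why it pays to measure non-model overhead in your own pipeline.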

Key Benchmarks Reshaping Machine Learning Retrieval

Chroma released a new agentic search benchmark alongside Context-1, as Thayer announced on LinkedIn. Context-1 performs strongly on it and on standard RAG tests: retrieval comparable to frontier models, up to 10x faster inference, and 25% lower cost, according to trychroma.com and Hugging Face.

These metrics provide a clear yardstick for agentic search pipelines.
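One way to apply that yardstick to your own data is to run the same harness against your current retriever and against a Context-1-based one. The sketch below measures mean recall@k and mean latency for any function that maps a question to a ranked list of document IDs; the dataset format and the toy retriever are hypothetical, and this is not Chroma's benchmark.

```python
from __future__ import annotations

import time
from statistics import mean
from typing import Callable


def evaluate(
    retrieve: Callable[[str], list[str]],
    dataset: list[tuple[str, set[str]]],
    k: int = 5,
) -> dict[str, float]:
    """Mean recall@k and mean per-query latency for any retriever function."""
    recalls, latencies = [], []
    for question, gold in dataset:
        start = time.perf_counter()
        results = retrieve(question)[:k]
        latencies.append(time.perf_counter() - start)
        recalls.append(len(set(results) & gold) / len(gold))
    return {"recall@k": mean(recalls), "mean_latency_s": mean(latencies)}


# Toy retriever and dataset (hypothetical), just to exercise the harness.
def toy_retrieve(question: str) -> list[str]:
    return ["d1", "d2"]


data = [("who wrote Dune?", {"d1"}), ("where was he born?", {"d2", "d3"})]
print(evaluate(toy_retrieve, data)["recall@k"])  # 0.75
```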

Integrating Context-1 to Skyrocket Developer Productivity

Getting started is easy. Download the open weights from Hugging Face and slot the model into a RAG stack built with LangChain or Haystack.

Basic steps:

- Install required dependencies.

- Load the open-weights model from Hugging Face.

- Wire it in as the retriever in your pipeline.

- Connect its output to your LLM for question answering or agent workflows.
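The four steps above can be sketched as a small pipeline. Installing dependencies and loading the weights are left out so the example runs anywhere; with real weights you would typically load the checkpoint through Hugging Face `transformers` (for example `AutoModelForCausalLM.from_pretrained`, using the repository ID from Chroma's model card) and wrap it behind the same `retrieve` method. `StubSearchAgent`, the `Retriever` protocol, and the prompt template are hypothetical stand-ins, not Chroma's, LangChain's, or Haystack's API.

```python
from __future__ import annotations

import re
from typing import Callable, Protocol


class Retriever(Protocol):
    """Anything that maps a question to a list of supporting passages."""

    def retrieve(self, question: str) -> list[str]: ...


class StubSearchAgent:
    """Hypothetical placeholder for Context-1: matches passages by shared words."""

    def __init__(self, docs: dict[str, str]) -> None:
        self.docs = docs

    def retrieve(self, question: str) -> list[str]:
        words = set(re.findall(r"\w+", question.lower()))
        return [
            text for text in self.docs.values()
            if words & set(re.findall(r"\w+", text.lower()))
        ]


def answer(question: str, retriever: Retriever, llm: Callable[[str], str]) -> str:
    """Retrieve context, build a grounded prompt, and hand it to the answering LLM."""
    context = retriever.retrieve(question)
    bullet_list = "\n".join(f"- {passage}" for passage in context)
    prompt = (
        "Answer using only the context below.\n\n"
        f"{bullet_list}\n\nQuestion: {question}\nAnswer:"
    )
    return llm(prompt)


docs = {"a": "Chroma stores embeddings.", "b": "Bananas are yellow."}
# An "echo" LLM that returns its prompt makes the assembled input easy to inspect.
print(answer("What does Chroma store?", StubSearchAgent(docs), llm=lambda p: p))
```

Keeping retrieval behind a narrow interface like this makes it easy to swap your current retriever for a Context-1-based one and compare answers side by side.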

Those 20B parameters handle the multi-hop reasoning, and the released data pipeline helps if you want to fine-tune for your own domain. Swap out slow retrieval and watch productivity climb: in AI tools, that means quicker prototypes and happier developers.
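If you fine-tune on your own domain, search trajectories need to become training examples. Chroma's released pipeline defines its own format, which isn't described here, so the sketch below assumes a generic chat-style `messages` layout common in SFT tooling; the `search(...)` call syntax, the `tool` role, and the `supporting_docs` field are hypothetical.

```python
from __future__ import annotations

import json


def trajectory_to_sft_record(
    question: str,
    steps: list[tuple[str, list[str]]],
    final_docs: list[str],
) -> dict:
    """Flatten one search trajectory into chat-style messages for SFT.

    Each step is (query the agent issued, document IDs the search tool returned).
    """
    messages = [{"role": "user", "content": question}]
    for query, doc_ids in steps:
        messages.append({"role": "assistant", "content": f"search({query!r})"})
        messages.append({"role": "tool", "content": json.dumps(doc_ids)})
    # Final turn: the supporting documents the agent selects for the answer.
    messages.append(
        {"role": "assistant", "content": json.dumps({"supporting_docs": final_docs})}
    )
    return {"messages": messages}


record = trajectory_to_sft_record(
    "In which city was the writer of the novel Dune born?",
    steps=[("author of the novel Dune", ["d1"]), ("Frank Herbert birthplace", ["d2"])],
    final_docs=["d1", "d2"],
)
print(len(record["messages"]))  # 6
jsonl_line = json.dumps(record)  # one line per trajectory in a JSONL training file
```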

Cost Savings and What It Means for the Tech Industry

Cloud inference costs drop 25%, according to Hugging Face and trychroma.com. At 20B parameters, the model scales to enterprise workloads without frontier-model bills, and the open-source benchmark and data pipeline level the playing field.
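To translate the 25% figure into a budget, here's a simple sketch. The traffic (5 million queries a month) and the $2.00 per 1,000 queries price are hypothetical placeholders, not published pricing; plug in your own numbers.

```python
def monthly_cost(queries: int, usd_per_1k_queries: float, discount: float = 0.0) -> float:
    """Monthly inference spend; `discount` is the fractional cost reduction."""
    return queries / 1000 * usd_per_1k_queries * (1 - discount)


baseline = monthly_cost(5_000_000, usd_per_1k_queries=2.00)
context1 = monthly_cost(5_000_000, usd_per_1k_queries=2.00, discount=0.25)
print(f"${baseline:,.0f} -> ${context1:,.0f}/month, ${12 * (baseline - context1):,.0f}/year saved")
# $10,000 -> $7,500/month, $30,000/year saved
```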

The future? Agentic search goes mainstream instead of staying elite. Machine learning teams gain an edge with fast, cheap retrieval in their daily workflows, and artificial intelligence gets more practical.

Chroma Context-1 nails open-source innovation. It transforms machine learning retrieval: speed, savings, simplicity. Researchers and developers, give it a spin and see how it boosts your edge in the tech industry.