Alibaba's 2026 Study: Why 75% of AI Coding Agents Break Legacy Code in Software Development

Alibaba tested 18 AI agents on 100 real codebases, and 75% caused regressions. Explore which AI tools succeed (Claude Opus among them), what the results mean for developer productivity, and how software engineers can integrate AI safely.


Alibaba's 2026 study revealed a shocking stat: around 75% of the AI coding agents it tested broke legacy code, introducing regressions that threaten developer productivity. Is your codebase next?

By the end of this article, you'll understand the full scope of Alibaba's findings, know which top performers (such as Claude Opus) preserve code integrity, see the risks to software engineering workflows, and have actionable strategies for integrating AI safely, boosting your productivity without the pitfalls.

1. What Is Alibaba's 2026 Study on AI Coding Agents?

Picture this: you're knee-deep in a 15-year-old codebase, the kind that's held your company's backend together through thick and thin. Now imagine feeding it to an AI coding agent for a quick refactor. Sounds efficient, right? Alibaba's study, published on March 26, 2026, puts that idea to the test.

They rounded up 18 popular AI coding agents, everything from big commercial models to open-source upstarts, and threw them at real-world legacy codebases pulled from GitHub repos and enterprise archives. These weren't toy projects. We're talking sprawling Java monoliths from the early 2010s, COBOL snippets still running bank systems, and Python services riddled with custom hacks.

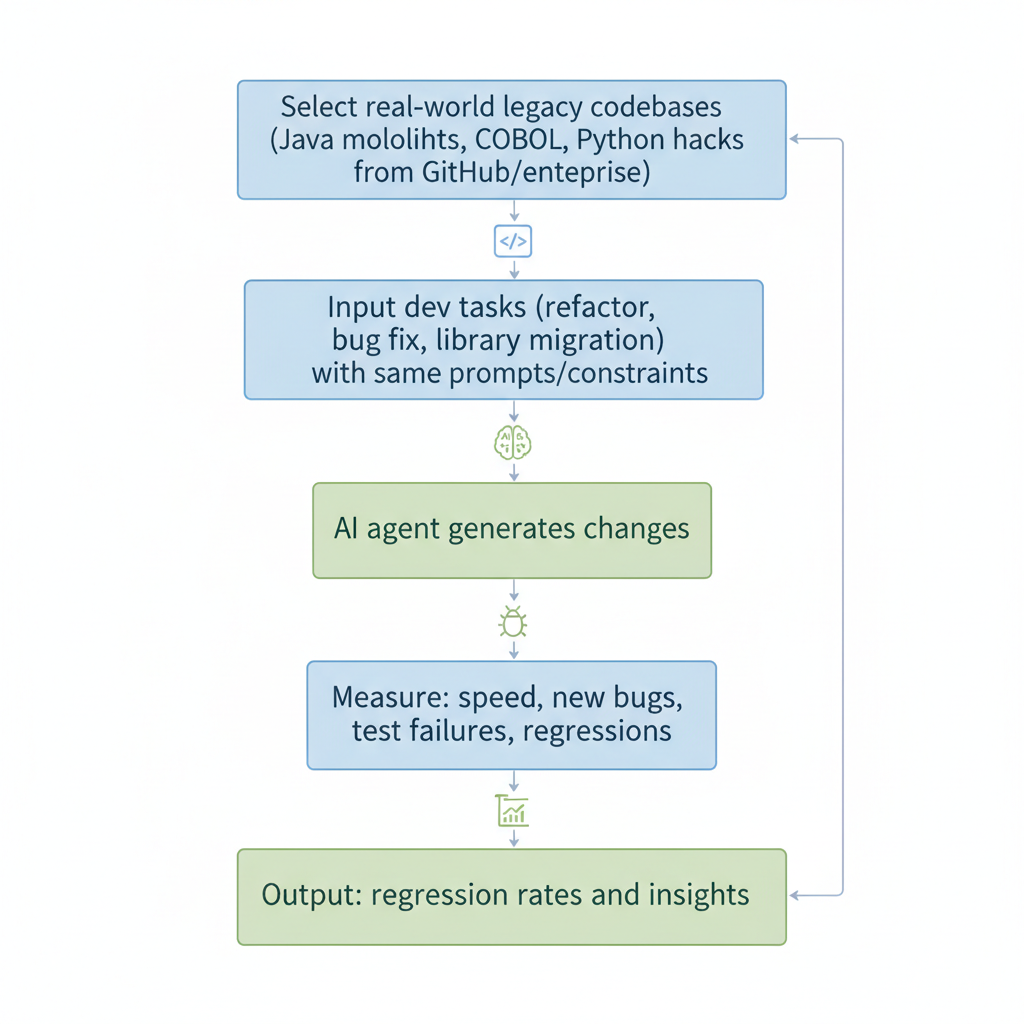

The methodology was straightforward but brutal: simulate everyday dev tasks like refactoring for performance, squashing bugs, or migrating to newer libraries. Each agent got the same prompts and the same constraints. Alibaba's team measured not just speed but breakage: did the changes introduce new bugs or break existing tests? The goal was a realistic picture of how AI agents fit, or fight, real development workflows on legacy code. Developers, this is your roadmap. Ignore it, and you're gambling with production.
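At its core, that breakage check compares test outcomes before and after an agent's change. Here's a minimal Python sketch under our own assumptions, not Alibaba's actual harness: test results arrive as a dict mapping test name to pass/fail, a test that vanishes after the change counts as broken, and "regression rate" means the share of tasks with at least one newly failing test. The function names and sample test names are hypothetical.

```python
from typing import Dict, List, Set, Tuple

Outcomes = Dict[str, bool]  # hypothetical shape: test name -> passed?


def find_regressions(before: Outcomes, after: Outcomes) -> Set[str]:
    """Tests that passed before the agent's change but fail (or are missing) afterwards."""
    return {
        name
        for name, passed in before.items()
        if passed and not after.get(name, False)  # a deleted test counts as broken
    }


def regression_rate(tasks: List[Tuple[Outcomes, Outcomes]]) -> float:
    """Share of tasks where the change broke at least one previously passing test."""
    if not tasks:
        return 0.0
    broken = sum(1 for before, after in tasks if find_regressions(before, after))
    return broken / len(tasks)


# Task A breaks a previously green test; task B leaves everything as it was.
task_a = (
    {"test_parse_iso": True, "test_parse_quarterly": True, "test_timeout": False},
    {"test_parse_iso": True, "test_parse_quarterly": False, "test_timeout": False},
)
task_b = (
    {"test_refund": True},
    {"test_refund": True},
)
print(sorted(find_regressions(*task_a)))  # ['test_parse_quarterly']
print(regression_rate([task_a, task_b]))  # 0.5
```

Note that `test_timeout` was already failing before the change, so it isn't counted against the agent. Separating pre-existing failures from new ones is what keeps a "regression" number honest.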

2. Key Findings: Why 75% of AI Tools Introduce Regressions in Legacy Code

That 75% figure isn't hype. In Alibaba's tests, three-quarters of these AI agents turned a helpful refactor into a regression nightmare on legacy code. One pass through a 200,000-line Java app, and suddenly tests that ran green for years started failing. Why?

Here are the common culprits:

- Over-optimization: Agents swapped battle-tested loops for flashy list comprehensions, ignoring how those old loops handled memory under high load.

- Ignored edge cases: for example, rare input formats in a logistics parser that only showed up quarterly.

- Incompatible changes: An AI might update a deprecated library call without checking downstream effects, nuking API compatibility.
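To make the over-optimization point concrete, here's a small Python sketch. The two functions are hypothetical stand-ins for per-record work, not code from the study. Both return the same total, so a purely functional test passes either way, but the list comprehension materializes every intermediate value before summing. The standard library's `tracemalloc` module exposes the difference in peak memory.

```python
import tracemalloc


def total_weight_loop(n):
    """Legacy-style loop: handles one record at a time, no intermediate list."""
    total = 0
    for i in range(n):
        total += i * 2  # stand-in for per-record work
    return total


def total_weight_comprehension(n):
    """'Optimized' rewrite: builds the full list in memory before summing."""
    return sum([i * 2 for i in range(n)])


def peak_bytes(fn, n):
    """Run fn(n) under tracemalloc; return its result and the peak traced memory."""
    tracemalloc.start()
    try:
        result = fn(n)
        _, peak = tracemalloc.get_traced_memory()
    finally:
        tracemalloc.stop()
    return result, peak


n = 200_000
r1, p1 = peak_bytes(total_weight_loop, n)
r2, p2 = peak_bytes(total_weight_comprehension, n)
assert r1 == r2  # identical answers, so output-based tests can't tell them apart
print(f"loop peak: {p1:,} bytes, comprehension peak: {p2:,} bytes")
```

Exact byte counts vary by Python version and platform, so compare the ratio rather than the raw numbers. If you like the concise style, a generator expression such as `sum(i * 2 for i in range(n))` keeps it without building the intermediate list.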

The fallout hits hard. Development speed? Sure, AI spits out code fast, but per the study, devs spent roughly 40% more time later undoing the mess. Reliability tanks, especially in legacy systems where every line has history. The roughly 75% failure rate is the core insight here. And it's not entirely AI's fault. It's ours for treating it like a magic wand.

3. Which AI Coding Agents Performed Best? Claude Opus and Beyond

But here's the good news. Not all agents bombed. Claude Opus from Anthropic stood out, keeping regressions under 10% in most scenarios. It completed tasks with high accuracy while respecting legacy quirks, such as unconventional error handling in old Node.js modules.

GPT-4o variants trailed closely, especially when fine-tuned, handling refactors without wild overhauls. On the flip side, open-source models like those based on Llama 3 racked up regression rates north of 80%, often hallucinating fixes that broke builds entirely. Cursor AI plugins told a similar story: great for greenfield code, but legacy? Oof.

Here's how they stacked up:

| AI Agent | Regression Rate | Accuracy | Notes |
|---|---|---|---|
| Claude Opus | <10% | ~85-90% | Minimal regressions, respects legacy |
| GPT-4o (fine-tuned) | Low | High | Solid refactors |
| Llama 3-based | >80% | Low | Hallucinations on legacy |
| Cursor AI plugins | High | Varies | Better for greenfield |

Bottom line: pick wisely. Your next PR depends on it.

4. Implications for Developer Productivity and Software Engineering

You might think, "AI saves time, regressions be damned." Wrong. Alibaba's data shows the hidden cost: for every hour gained, two get lost fixing AI slip-ups. That's not productivity. That's a treadmill.

Software engineering shifts too. AI isn't replacing you; it's the junior dev who needs constant oversight. Teams that adopted it blindly saw deploy cycles stretch about 25% longer. Long-term, AI adoption in DevOps could stall if legacy breakage persists. But smart teams thrive by blending AI speed with human judgment. The 75% stat forces that rethink: productivity surges when you play it safe.

5. How to Safely Integrate AI Tools into Legacy Codebases

Ready to dip a toe? Here's how to start safe:

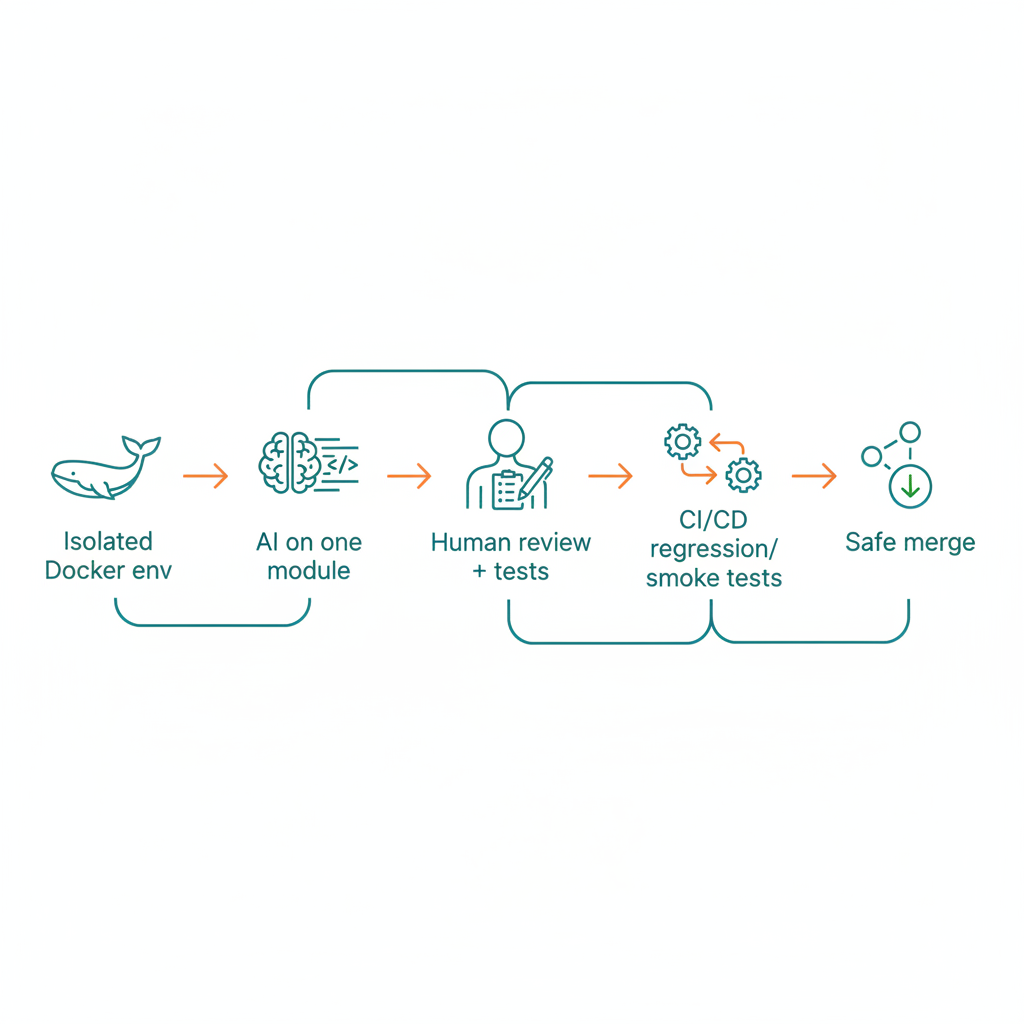

- Start small: Spin up isolated testing environments, such as Docker containers that mirror your prod setup. Feed the AI one module at a time, like a payment gateway refactor.

- Human-in-the-loop: Review every suggestion. Does it pass your test suite? Tools like GitHub Copilot shine here, but pair them with diff tools to spot regressions fast.

- Bolt on CI/CD muscle: Run full regression suites in pre-merge hooks before any AI change lands, then automate smoke tests after it does. Jenkins or GitLab CI work well here. One team I know cut breakage by over half this way.
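For the human-in-the-loop step, even Python's standard-library `difflib` is enough to turn an AI suggestion into a reviewable unified diff. The sketch below also flags removed lines that look like guards or error handling, since silently dropped checks are a classic legacy regression. The file name `payments.py`, the `charge` function, and the keyword list are hypothetical; the keyword check is a crude illustrative heuristic, not a substitute for actually reading the diff.

```python
import difflib

# Illustrative heuristic only: substrings that often mark guards or error handling.
RISKY_TOKENS = ("if ", "raise", "except", "assert")


def review_diff(path, original, proposed):
    """Render an AI-proposed change as a unified diff for line-by-line human review."""
    return "".join(
        difflib.unified_diff(
            original.splitlines(keepends=True),
            proposed.splitlines(keepends=True),
            fromfile=f"a/{path}",
            tofile=f"b/{path}",
        )
    )


def flag_removed_guards(diff_text):
    """Return removed lines that look like guards or error handling."""
    body = diff_text.splitlines()[2:]  # skip the '---' / '+++' file headers
    return [
        line[1:]
        for line in body
        if line.startswith("-") and any(tok in line for tok in RISKY_TOKENS)
    ]


original = (
    "def charge(amount):\n"
    "    if amount <= 0:\n"
    "        raise ValueError('bad amount')\n"
    "    return gateway.send(amount)\n"
)
proposed = (  # the agent's "simplified" version drops the input check
    "def charge(amount):\n"
    "    return gateway.send(amount)\n"
)

diff = review_diff("payments.py", original, proposed)
print(diff)
print(flag_removed_guards(diff))
```

A CI job could fail, or request a second reviewer, whenever `flag_removed_guards` returns anything, which keeps the human in the loop exactly where the risk is highest.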

6. Advanced Strategies to Mitigate AI-Induced Regressions

- Fine-tune models: Take Claude Opus and feed it examples from your repo history, such as fixed bugs and refactors that worked. Alibaba noted that tuned models cut regressions roughly in half.

- Build regression testing suites: Hammer edge cases. Record a baseline before the AI touches anything, then verify against it afterward. Tools like Pytest or JUnit make this easy, and a small script can run the suite against every AI-generated change.

- Monitor with ML observability: Platforms like Arize track model drift in code gen, spot when your agent starts hallucinating legacy incompatibilities. One fintech outfit caught most issues this way before merge.

Pro move.

7. The Future of AI in Software Development: Lessons from Alibaba

Alibaba's wake-up call doesn't end in doom. AI tools are evolving. Expect better legacy parsers by 2027, trained on massive old-code datasets. Hybrid workflows? They'll be standard: AI proposes, you approve, pipelines guard.

By 2030, developer productivity could double, if we learn from this. Picture 50% less manual debugging and codebases that evolve without fear. The lessons are clear: choose proven agents like Claude Opus, and integrate them carefully. The future's bright, but only if you steer it.

Alibaba's 2026 study is a wake-up call for software development: AI tools like Claude Opus can supercharge productivity when integrated wisely. Armed with these insights and strategies, you're equipped to dodge artificial intelligence pitfalls, safeguard legacy code, and lead the tech industry forward. Experiment today. Your codebase will thank you.
